The Invisible Multiplication Effect

British universities have embraced artificial intelligence and machine learning with remarkable enthusiasm, deploying these tools across disciplines from climate science to genomics. Yet this computational revolution masks a fundamental weakness: the systematic amplification of measurement errors that originate from poorly maintained laboratory equipment and inconsistent protocols.

Unlike human researchers who might notice anomalous readings or question unexpected patterns, machine learning algorithms accept input data as authoritative truth. When that data emerges from miscalibrated spectrometers, drift-prone sensors, or inadequately standardised procedures, the resulting analyses compound these errors in ways that become virtually impossible to detect.

Case Study: Climate Modelling Gone Astray

The Met Office's regional climate prediction models rely heavily on temperature and precipitation data collected by university weather stations across Britain. A recent audit revealed that approximately 30% of these stations had not undergone calibration verification in over two years, with some instruments showing systematic biases of up to 0.8 degrees Celsius.

When this biased data feeds into machine learning algorithms designed to identify long-term trends, the results appear statistically robust whilst being fundamentally flawed. Dr Rebecca Thomson from the University of Edinburgh's atmospheric science department explains: "The algorithm sees patterns that aren't really there, or misses genuine signals buried in instrumental noise. We're essentially teaching our computers to perpetuate our measurement mistakes."
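A minimal Python sketch shows how an uncalibrated instrument can manufacture a "signal". It is purely illustrative and rests on two assumptions. The first is a station where the true temperature does not change at all. The second is an uncorrected bias that grows steadily to 0.8 degrees Celsius over two years. That figure is the upper value from the audit above, applied here as a hypothetical linear drift. An ordinary least-squares fit, the simplest possible trend detector, then reports warming that exists only in the instrument.

```python
# Illustrative sketch: a slowly drifting, uncalibrated sensor can manufacture a "trend".
# All values are hypothetical and chosen only to make the effect visible.

def linear_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

months = list(range(36))              # three years of monthly readings
true_temp = [10.0 for _ in months]    # assumption: the real climate has no trend at all
drift_per_month = 0.8 / 24            # assumption: bias reaches 0.8 degrees C after 24 months
measured = [t + drift_per_month * m for t, m in zip(true_temp, months)]

# Convert slopes from degrees per month to degrees per year.
print(f"true trend:     {linear_slope(months, true_temp) * 12:.2f} degrees C per year")
print(f"measured trend: {linear_slope(months, measured) * 12:.2f} degrees C per year")
```

Nothing in these readings alone distinguishes the drift from genuine warming. Only an independent calibration check would reveal it.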

Photo: University of Edinburgh, via edinphoto.org.uk

Genomics and the False Precision Problem

British genomics research faces similar challenges: laboratories are upgrading to automated sequencing platforms without corresponding investment in quality control infrastructure. The Human Genetics Commission recently highlighted cases where systematic errors in DNA preparation protocols created artificial genetic variants that machine learning systems confidently classified as medically significant.

Professor Michael Stratton from the Wellcome Sanger Institute describes the scale of the problem: "We're generating terabytes of genetic data daily, but if the underlying biochemistry is inconsistent, we're just creating very large databases of systematic error. The AI doesn't know the difference between a real mutation and a laboratory artefact."

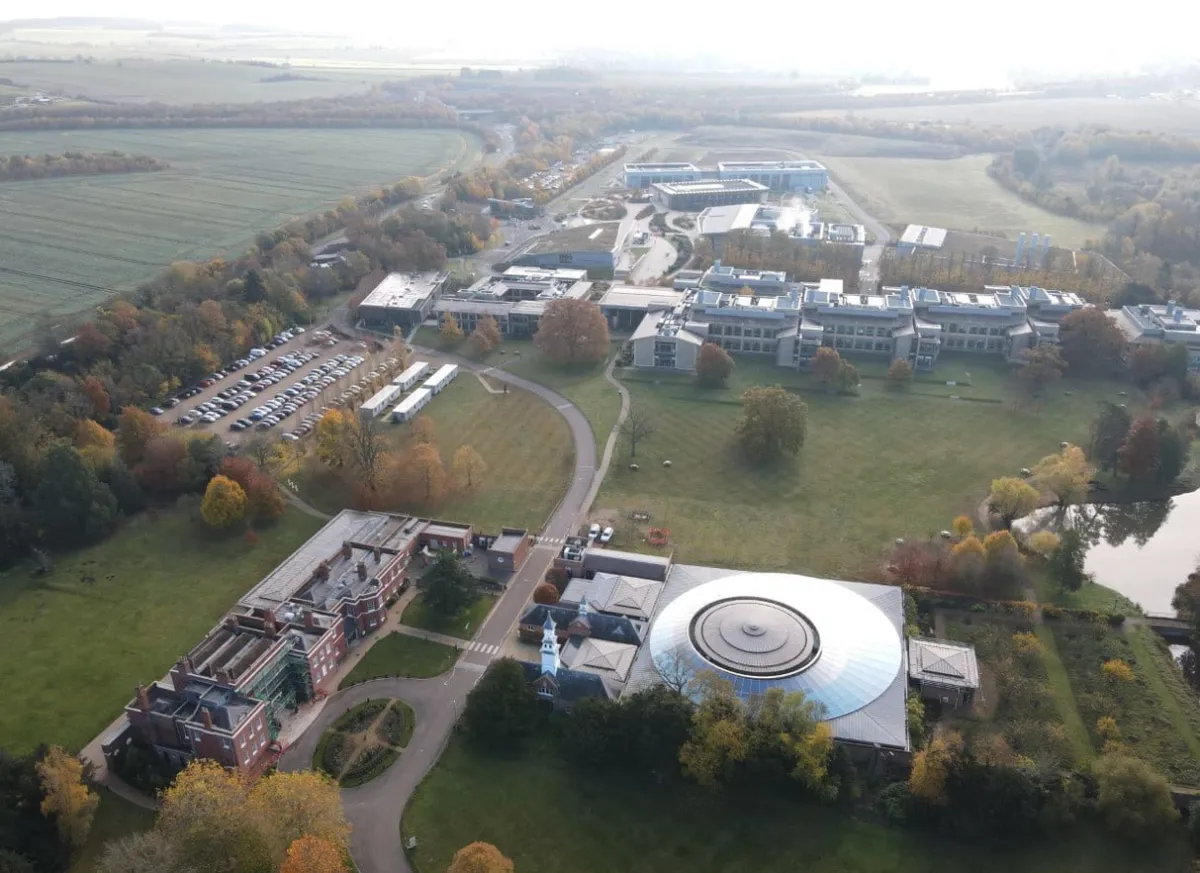

Photo: Wellcome Sanger Institute, via www.hutton-group.co.uk

These false discoveries can have serious implications for medical research and patient care. When machine learning algorithms identify spurious genetic markers associated with disease risk, the resulting clinical guidelines may recommend unnecessary treatments or screening programmes.

Materials Science and the Reproducibility Crisis

Britain's materials science laboratories increasingly rely on automated characterisation equipment coupled with AI analysis to identify promising new compounds. However, electron microscopes, X-ray diffractometers, and other sophisticated instruments require regular calibration using certified reference materials that many departments cannot afford to replace frequently.

Dr James Peterson from Imperial College London's materials department has documented how subtle calibration drift can lead machine learning systems to classify identical samples differently depending on when they were analysed. "The algorithm learns to recognise the instrument's quirks rather than genuine material properties," he explains. "We end up with models that work perfectly in our laboratory but fail completely when applied elsewhere."
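Dr Peterson's point can be illustrated with a deliberately simple stand-in for a trained model: a fixed decision threshold. The sketch below makes several assumptions, and every name and number in it is hypothetical. The instrument offset is purely additive. The certified reference value and the threshold are arbitrary. A reference standard is measured in every session. Real drift may be multiplicative or non-linear and would need a fuller calibration curve.

```python
# Hypothetical sketch: a fixed threshold applied to drifting readings classifies the
# same sample differently across sessions. Referencing each session against a
# certified standard measured in that session removes the (assumed additive) offset.

CERTIFIED_VALUE = 100.0   # assumed certified value of the reference material (arbitrary units)
THRESHOLD = 50.0          # hypothetical cut-off standing in for a trained classifier

def classify(reading):
    """Stand-in for a model: class A at or above the threshold, class B below it."""
    return "A" if reading >= THRESHOLD else "B"

def drift_corrected(sample_reading, standard_reading):
    """Subtract the session offset estimated from the reference standard."""
    offset = standard_reading - CERTIFIED_VALUE
    return sample_reading - offset

TRUE_SAMPLE_VALUE = 51.0  # the same physical sample is measured in both sessions
sessions = [
    {"name": "January", "offset": 0.0},
    {"name": "June", "offset": -2.0},   # assumption: instrument has drifted by -2 units
]

for s in sessions:
    sample = TRUE_SAMPLE_VALUE + s["offset"]
    standard = CERTIFIED_VALUE + s["offset"]
    print(s["name"], "raw:", classify(sample),
          "corrected:", classify(drift_corrected(sample, standard)))
```

In January both readings fall into class A. After the assumed drift, the raw June reading flips to class B, while the drift-corrected value stays in class A.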

Photo: Imperial College London, via www.e-architect.com

The Institutional Response Gap

British funding bodies have been slow to recognise the implications of this data quality crisis. Research Excellence Framework assessments continue to reward publication volume and computational sophistication whilst giving minimal weight to measurement integrity or reproducibility. This creates perverse incentives for researchers to prioritise algorithmic complexity over fundamental experimental rigour.

The Engineering and Physical Sciences Research Council recently acknowledged the problem in its strategic review, noting that "the rapid adoption of AI-driven analysis methods has outpaced the development of appropriate quality assurance frameworks." However, concrete policy responses remain limited.

International Competitiveness at Stake

Britain's scientific reputation increasingly depends on the reliability of its research outputs, particularly as international collaborations become more data-intensive. When British laboratories contribute systematically flawed datasets to global research consortia, the resulting publications may be retracted or discredited, damaging the UK's scientific standing.

The recent controversy surrounding British contributions to the Global Climate Observing System illustrates this risk. Several European partners questioned the quality of UK atmospheric data after machine learning analyses produced results inconsistent with other national datasets.

Technological Solutions and Human Oversight

Some British institutions are pioneering approaches to address these challenges. The University of Cambridge has implemented "algorithmic auditing" procedures that require researchers to validate machine learning results using independent measurement techniques. Similarly, Oxford's chemistry department mandates regular blind testing of automated instruments using known standards.
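The article does not describe how Oxford's blind testing is carried out. As a generic sketch only, a blind test can be reduced to one comparison. The instrument's reported values for disguised standards are checked against their certified values and a stated tolerance. The class name, sample labels, values and tolerances below are all hypothetical.

```python
# Hypothetical blind-test check: known standards are submitted as ordinary samples,
# and the instrument passes only if every result falls within its tolerance.
from dataclasses import dataclass

@dataclass
class BlindStandard:
    label: str         # anonymised ID seen by the operator
    certified: float   # certified reference value (hidden from the operator)
    measured: float    # value the instrument reported
    tolerance: float   # acceptable absolute deviation (assumed)

def audit(standards):
    """Return the labels of standards whose measured value is out of tolerance."""
    return [s.label for s in standards if abs(s.measured - s.certified) > s.tolerance]

results = [
    BlindStandard("S-01", certified=25.00, measured=25.03, tolerance=0.10),
    BlindStandard("S-02", certified=50.00, measured=50.21, tolerance=0.10),
    BlindStandard("S-03", certified=75.00, measured=74.95, tolerance=0.10),
]

failures = audit(results)
print("PASS" if not failures else f"FAIL: {failures}")
```

Keeping the certified values hidden from the operator is what makes the test "blind". The instrument, not the person running it, is what gets assessed.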

These initiatives demonstrate that technological solutions exist, but they require sustained investment and cultural change within research institutions. The challenge lies in convincing researchers that measurement quality is as important as analytical sophistication.

Regulatory Implications

The implications extend beyond academic research into regulatory science and policy development. When government agencies rely on university research to inform environmental regulations, drug approvals, or safety standards, systematic measurement errors can compromise public protection.

The Food Standards Agency recently revised several nutritional guidelines after discovering that supporting research was based on systematically biased analytical data. This incident highlighted the need for more rigorous quality control in research that informs public policy.

A Call for National Standards

Britain requires a coordinated response to this emerging crisis. The proposed National Data Quality Initiative would establish mandatory calibration standards for research equipment, create shared reference material programmes, and develop AI systems capable of detecting systematic measurement errors.
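Automated detection of systematic error need not begin with AI. One classical statistical process control tool is the tabular CUSUM chart. It is offered here as an illustration, not as a description of the proposed initiative. The chart accumulates small, persistent deviations of check-standard readings from their certified values and raises an alarm once the running sum exceeds a decision limit. The allowance `k`, limit `h` and residual series below are hypothetical.

```python
# Sketch of a two-sided tabular CUSUM applied to check-standard residuals
# (measured minus certified). Parameter values here are illustrative assumptions.

def cusum_alarm(residuals, k, h):
    """Return the index of the first reading at which the CUSUM signals drift, or None."""
    upper = lower = 0.0
    for i, r in enumerate(residuals):
        upper = max(0.0, upper + r - k)   # accumulates persistent positive bias
        lower = max(0.0, lower - r - k)   # accumulates persistent negative bias
        if upper > h or lower > h:
            return i
    return None

# Hypothetical daily residuals from an instrument that is slowly drifting upwards.
residuals = [0.01, -0.02, 0.00, 0.03, 0.05, 0.08, 0.10, 0.12, 0.15, 0.18]
print(cusum_alarm(residuals, k=0.025, h=0.2))
```

Because the drift is gradual, no single reading in this series looks alarming. The cumulative sum nonetheless crosses the limit at the eighth reading (index 7).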

Such an initiative would require collaboration between universities, government agencies, and industry partners. The investment would be substantial, but the cost of continued systematic error amplification could be far greater, potentially undermining decades of scientific progress and public trust in evidence-based policy.

The time has come for Britain's scientific community to acknowledge that computational sophistication cannot compensate for fundamental measurement weaknesses. Only by addressing these underlying quality issues can the nation maintain its position at the forefront of global research excellence.